How I Learned to Stop Worrying, Love AI, and Be a Bit Less Responsible

In my last post, I summarized why I think it’s so damn hard to understand what’s happening with AI. It’s not that I haven’t been trying. These days, it seems like AI is the only thing I read about. We’re all blind men trying to understand space elephants, but that doesn’t stop the influencers from co-opting the moment for views, likes, and subscriptions.

I’ve read all the breathless posts about how AI is coming for all of our jobs and how you’d better batten down the hatches, cut your burn rate, and learn to use AI. But that strikes me as patently absurd, self-serving propaganda.

If AI is going to disrupt society as much as influencers claim, cutting your monthly spending and learning to prompt Gemini a bit better are the equivalent of trying to outrun an 80-mile-per-hour avalanche in 3 feet of powder while wearing ski boots. It ain’t happening.

If you keep wondering “what the hell is a person to do?” and you’re neither a billionaire nor an ML genius, the best advice I’ve got is to basically keep doing what you’re doing today, but err on the side of saying “yes” to more experiences. That’s it. Not very revolutionary, but I think it’s probably right.

If you buy that advice, then you can stop reading here. I just saved you 10 minutes of your life. You could use that time to go outside and do something enjoyable. You’re welcome.

But if your reaction is closer to “what the hell is this guy talking about, it’s the end of the world and he wants me to take a slightly longer vacation!?” read on for the detailed argument.

The Good Place

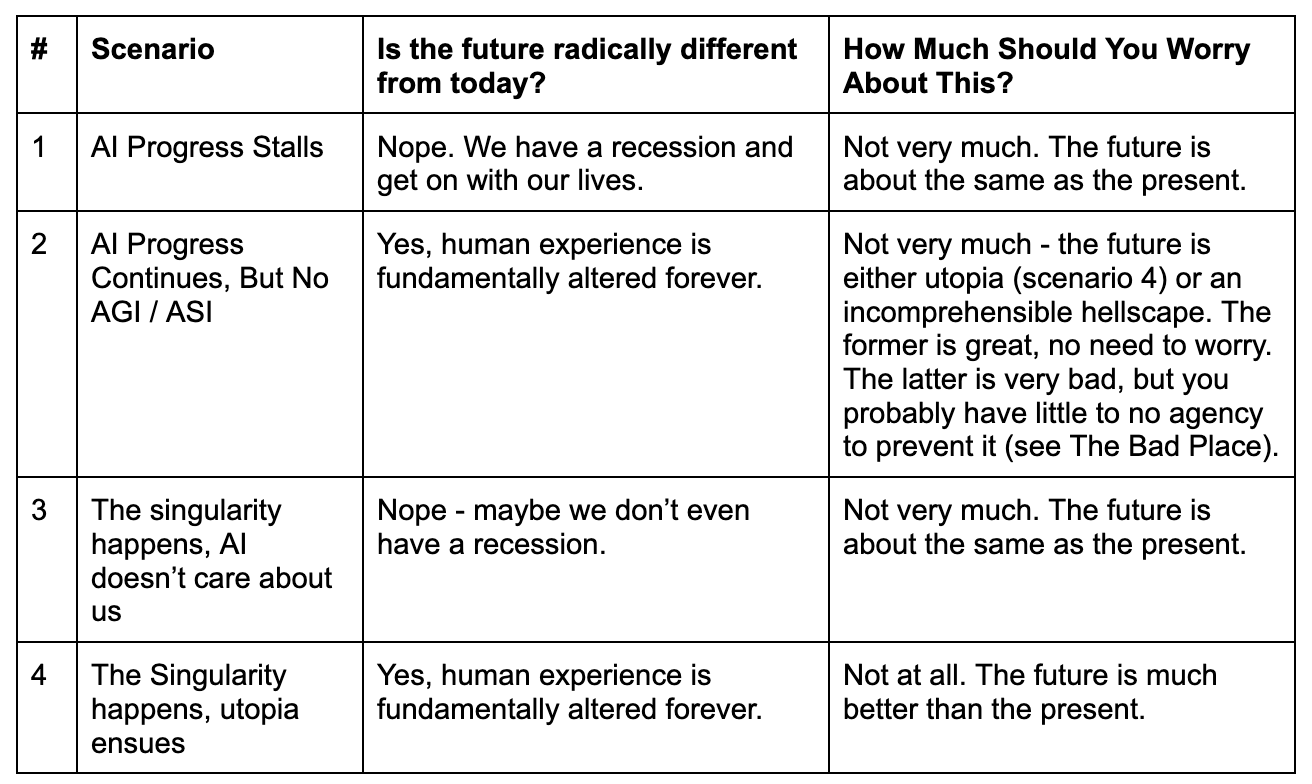

Let’s imagine 4 possible futures.

Scenario #1: AI Progress Stalls

Let’s not get hung up on exactly how this happens; just imagine that AI progress simply stops in some catastrophic and unpredictable way.

This would likely lead to a big economic recession as the AI bubble pops, but the world would continue to function much as it does today. We’ve experienced big recessions and depressions before. There would be a lot of misery, but it certainly wouldn’t change life as we know it.

Scenario #2: AI Progress Continues, But No AGI / ASI

This is an even stranger thought experiment: imagine that AI capabilities keep advancing, but we never get AGI / ASI. For instance, imagine that we have models capable of long-running reasoning with virtually no hallucinations, robots with high dexterity, and compute costs that approach $0.00 per token.

Once you think this through, I think the outcomes look almost indistinguishable from either The Bad Place or The Good Place (scenario #4). Here’s why.

If there were essentially free, smart, capable, and physically embodied AIs, then whichever human wielded them would become very powerful very quickly. They could use that nearly infinite intelligence to suppress dissent, uproot every last dandelion on the planet, or indulge any other whim that strikes their fancy. (Remember, they don’t just have thinking models, but robots to translate digital thoughts into physical action!) Nobody could oppose them, because even a slight initial edge in resources would compound as their AI agents worked to self-improve and cement that advantage.

So humanity’s fate boils down to what that human overlord wants. If they only want to subjugate and control everyone else, we get The Bad Place (see below). If they want to make humanity happy, we get The Good Place (scenario #4, see below). The only distinction in either case would be the substrate actually making the decisions. If it’s an AI, it’s electrical impulses on a silicon wafer. If it’s a human, it’s electrical impulses moving across brain tissue.

Scenario #3: The Singularity Happens, AI Doesn’t Care About Us

If this happened, there would be an adjustment (possibly a big recession) as the AI’s intentions became clear and humanity adapted to essentially being ghosted by the superintelligence we created. This is what we see in the movie “Her.” It’s an unsatisfying ending, but as in scenario #1, daily life for most people probably doesn’t change all that much.

Scenario #4: The Singularity Happens, Utopia Ensues

Here, let’s assume that the singularity happens and, for whatever reason, the AI loves humanity, perfectly understands our individual needs and desires, and proceeds to craft perfect worlds for each of us. We all get to live infinitely long, infinitely meaningful lives based on our own personal value systems.

Why You Don’t Need To Worry About Any of This

I don’t think anyone needs to lose sleep over these scenarios.

The Bad Place

This is the scenario that all the social media pundits are talking about. The most newsworthy and hyperbolic of these accounts has our society rapidly and unceremoniously disintegrating starting sometime next year (or maybe next month, or next week; the timelines keep getting pulled forward).

But no matter how fun it is to ridicule hyperbolic AI doomsday predictions and the Friedman-unit timelines on which they are supposed to occur, I think the predictions are at least plausible.

If these predictions come to pass, almost nothing about the future would resemble the present. And I want to really press on this point because I think the horror of it gets lost in the TikTok reels and soundbites.

Your mortgage, kids, community, school, car, house, 401k, hobbies, health problems, memories, family history, tastes, skills, lovers, and enemies will all be rendered meaningless in a very short period of time. Nobody knows exactly what happens afterwards, but given how delicate our species is, you can easily imagine that the overwhelming majority of possible futures aren’t conducive to meaningful human life.

In short, if you take Dario Amodei or Sam Altman at their word about their AI takeover future, it’s so impossibly bad for most people that it barely merits consideration. I think people rarely point this out because they haven’t thought through the second- and third-order effects, and because they tend to reject catastrophic thinking.

Unfortunately, just because something seems catastrophically bad doesn’t make it untrue or less likely.

What To Do If You’re a Billionaire or ML Genius

Up to this point, I’ve assumed that you, the reader, are a fairly average human like me. You might live in a 3-bedroom duplex in Chicago with your two kids. Maybe you’re a high school student pondering the meaninglessness of learning calculus in the age of AI. Maybe you’re about to retire in that beach home you bought in South Carolina in 2006 and just can’t wait to stop getting corporate email. That’s fine; I’m right there with you. Most of us have almost no ability to influence big trends like AI.

But if you happen to be a billionaire investor or AI wunderkind, I need to add an appeal here. You have a rare degree of agency. Things are getting scary for a lot of people. Do the right thing and try to stabilize the situation. The argument that an AI takeover wouldn’t be a terrible thing for humanity is a philosophical farce.

Let me reiterate that point: there’s nothing noble, interesting, or good about AI destroying humanity. I’m willing to die on this hill, so feel free to object in the comments and we can have a good old-fashioned flame war.

If you do fall into this category, unfortunately I don’t have a great tactical next step for you. But if you’re a billionaire or ML genius, please use your money or smarts to introspect and figure out a way to help your fellow humans. My kids will thank you.

Advice For The Rest of Us

Okay, back to our regularly scheduled program.

If you’re a normal person, I suggest the opposite of the now-standard “batten down the hatches and learn to vibe code” approach. The only scenario in which any of us normies have any chance to exercise agency in the future is if AI unexpectedly and unpredictably implodes.

That’s it. Every other scenario leads to inexorable forces circumscribing our future in massive and unpredictable ways.

I don’t think embracing nihilism and hedonism works (see below), so here’s my super boring advice: YOLO a bit.

Why Nihilistic Hedonism Doesn’t Work

Let’s say that you know beyond a shadow of a doubt that the world will blink out of existence in exactly 3 years. Ignore for the moment how you know this; let’s just assume you are absolutely, unshakeably convinced of this fact. This is going to happen with 100% probability. Not 98% or 99.99% probability. It is a foregone conclusion: 100% certainty.

What would you do with your remaining time?

Most people’s first response is to go all in on hedonism. For me, that would probably be eating nothing but candy and watching movies. That sounds pretty great on the surface. I love candy and I love movies.

But if I ate nothing but candy for even an afternoon, I’d get a splitting sugar headache and my body would feel terrible. I’d probably throw up, and if I kept at it, I’d develop diabetes pretty quickly and then be in constant agony as my body slowly died. Lovely!

And even if I girded my proverbial loins and kept pounding down the Twizzlers while watching The Wire on repeat, the people who love me would be hurt. My kids would wonder why Dad doesn’t want to play with them. My wife would be devastated and wonder if I’d had a mental breakdown. My parents would be in tears trying to change my mind.

So paradoxically, my nihilistic attempt to live it up in my last years on earth would be extremely miserable. Oh, and don’t forget about the organ failure and diabetic coma.

There are only two scenarios in which leaning into nihilism actually works to improve your remaining time:

Most of the people in your life completely agree with your assessment about the doomsday timeline and its probability.

Most of the people in your life are completely unemotional about the loss of the world and their future.

If you were in an unusually well-aligned AI doomsday cult, had no friends or family members outside the cult, and were the sort of person who never re-examines or second-guesses their predictions, then you’ve got me: go buy a pallet of your favorite candy and a fridge full of insulin, and get watchin’.

For everyone else, you need an alternative.

Why It Makes Sense to YOLO A Bit

Let’s revisit the hypothetical from the last section, but with a twist. Let’s say that you are pretty sure that the earth will blink out of existence in 3 years. You aren’t 100% certain, though; you’re only 90% certain. And you are surrounded by people who think the odds are more like 5%. You’ve also thought a bit about the second- and third-order effects of embracing nihilism.

In this far more realistic hypothetical, the most logical way to maximize the enjoyment of your remaining time on earth is to do meaningful and joyful things at the margin.

Take a slightly longer vacation. Go skiing with your kids. Buy that bigger TV. Watch a play.

Doing those things won’t hurt the people who love you, and they will make your life more enjoyable. If you were really confident that the future would be almost identical to the present and you liked delaying gratification, this might feel uncomfortable. That’s the beauty of this strategy!

If you’re right about the coming doom, you did stuff that made you and those around you happy. By making the people around you happy, you probably make yourself happier still.

If you’re wrong, the bad thing doesn’t happen and you get to continue enjoying all the great things you built.

This is getting dangerously close to the schlock of Dead Poets Society, but there’s a reason stories like that resonate. There’s some truth in there.

In my own life, I’m planning a vow renewal party with my wife. We’re doing a big road trip with the kids this summer. I just bought a big stupid overlanding van.

So that’s the best I’ve got. I’ve spent 1,750 words making the argument that the AI doomsday is best dealt with by doing what you learned in elementary school. Be kind to yourself. Love the people around you. Try to say “yes” to a couple more things.