Space Elephants Across the Universe: Why Nobody Knows What's Going On With AI

Image from Midjourney of course.

The Original Parable

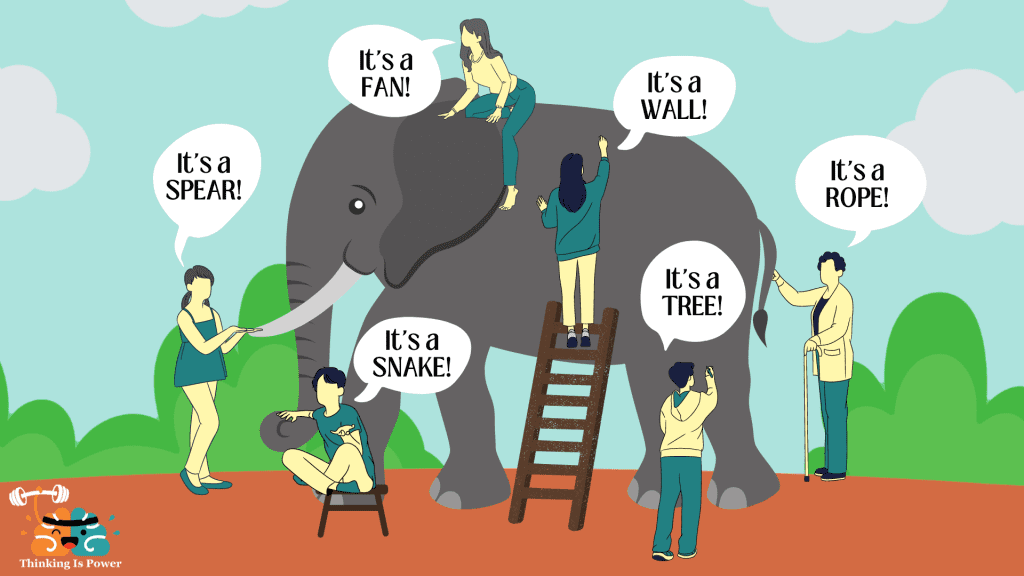

Maybe you’ve heard the parable of the blind men and the elephant. It’s about a bunch of blind guys who are invited to touch an elephant and describe it. Because the elephant is so large and various parts feel so different, the blind folks cannot agree on what they are touching. In some versions, they come to blows over their different impressions. You may have seen pictures like this illustrating the lesson:

It’s not a bad metaphor to illustrate why it’s so hard to discuss AI in early 2026.

There are people out there today who are using the Gemini 2 free plan on a 6-year-old Android device on spotty internet with no prompting experience. These folks have heard that AI can solve any problem, replace any human, and do it instantly. They give it a shot, are underwhelmed, and conclude that AI is overhyped.

On the opposite end of the spectrum, there are people using the latest desktop computer with 5 external monitors on a 10-gigabit fiber line who instantly adopted Claude 4.6 Opus when it was released, have built multi-agent frameworks to write code in parallel, and whose area of expertise is well-served by current AI coding tools. To them, current-gen AI models are not just competent and under-hyped; they represent an existential risk to their livelihood.

You probably fall somewhere between these extreme stereotypes. All of these variables (hardware, connectivity, model choice, prompting experience, and problem domain) help to explain why sober-minded people might use the same technology and draw opposite conclusions about its utility.

That would make it difficult enough, but the situation we find ourselves in today is much more confusing.

The Original Parable No Longer Works

In the original parable, there is a single elephant that is being touched by several blind men. Despite their different points of reference, they are still observing the characteristics of a single mammal at a single point in time. But AI technology today is evolving so rapidly that this doesn’t really work.

Instead of a single elephant being investigated by a team of patient blind men, we have to conjure a far more bizarre situation to understand what’s happening today. The easiest way I can think of making the point is to talk about space elephants and the speed of light.

Buckle up, it’s about to get weird.

Space Elephants Across the Universe

A real photo? C’mon. It’s 2026. That’s all AI slop, friend.

Imagine that instead of a single elephant and a single pack of handsy blind men, we have a dozen elephants that are placed at random across planets outside of our solar system, but within 50 light years of Earth. Each elephant is accompanied by a single blind man. Let’s assume they can survive indefinitely on their planets.

Just like in the original parable, each blind man has been instructed to describe what he is touching and send his findings to you. Let’s assume you have the radio telescope array pictured above to receive their messages.

You haven’t been told what the blind men are touching. Your goal is to piece together the fragments of their descriptions and puzzle it out. But because these planets are very far from you, at different starting distances, in constant motion, and each planet has only a single blind man investigator, the task is much harder. Let’s see how this might play out.

The nearest known planet to Earth outside of our solar system orbits the star Proxima Centauri, which is about 4.2 light years away. Let’s say one of the elephant / blind man pairs gets lucky and is placed there. You would receive their transmission first because of how close they are.

But as soon as you receive the report, it is hopelessly out of date. The elephant and the blind man are now 4.2 years older than they were when they sent the message. And if you respond, 8.4 years will have elapsed between their first report and the arrival of your reply. For some teams on extremely distant planets, by the time you get their message, both the sender and the elephant may have died. This is the situation that we find ourselves in today with AI.
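If you want to check the arithmetic, here is a minimal sketch in Python. It assumes the messages travel at the speed of light and, for simplicity, that each planet stays at a fixed distance from you (the scenario says they are in constant motion, so treat this as a rough approximation). The function names and the list of outposts are made up for illustration; only the 4.2 and 50 light year figures come from the scenario.

```python
# A distance in light years is, by definition, how many years a light-speed
# signal takes to cover it, so the delay in years equals the distance.

def report_age_on_arrival(distance_ly: float) -> float:
    """Years that have passed at the sender by the time you read the report."""
    return distance_ly


def round_trip_years(distance_ly: float) -> float:
    """Years between the sender's report and the arrival of your reply."""
    return 2 * distance_ly


# Hypothetical outposts: the closest case and the farthest one allowed.
outposts = [
    ("Proxima Centauri system", 4.2),
    ("edge of the 50 light year limit", 50.0),
]

for name, distance_ly in outposts:
    age = report_age_on_arrival(distance_ly)
    round_trip = round_trip_years(distance_ly)
    print(f"{name}: report is {age:.1f} years old on arrival, "
          f"reply lands {round_trip:.1f} years after it was sent")
```

Even in the best case, every report describes an elephant from years ago, and the farthest reports describe one from half a century ago.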

We are all trying to describe a highly complex phenomenon with limited information and arbitrary constraints placed on our ability to comprehend it.

Cutting Through the Noise

If you’ve gotten this far, you’ve probably bought the argument that it’s very difficult for any one person to comprehend the details of what’s happening with AI. There’s just too much happening too fast.

But even if you can’t know all the details, it seems fairly reasonable to me that AI is pretty scary and it’s getting scarier at an ever-increasing rate. AGI or ASI could exist right now – the very moment you are reading these words – but it might just be happening elsewhere to other people and hasn’t reached you yet. You wouldn’t even know that you don’t know until that information crashes into your reality.

In the meantime, posts on Hacker News and Reddit from other people will seem increasingly out of touch with the reality you are experiencing.

In my next post, I’ll cover what I think a rational actor can actually do about any of this, but for now, I’ll just leave you with the image of relativistic space elephants to convey the point that making predictions about AI is really damn hard right now.